Prof. Dr. Gerit Wagner

(2026-04-20)

Categories of ML: Supervised vs. Unsupervised (10 min)

TBD: ## Categories in Machine Learning

Brief reminder: logistic regression from previous lecture

Motivation for today:

Note/TBD: focus on supervised machine learning.

TODO: mention focus on primarily (binary) classification tasks

TODO: add a definition (and introductory example)

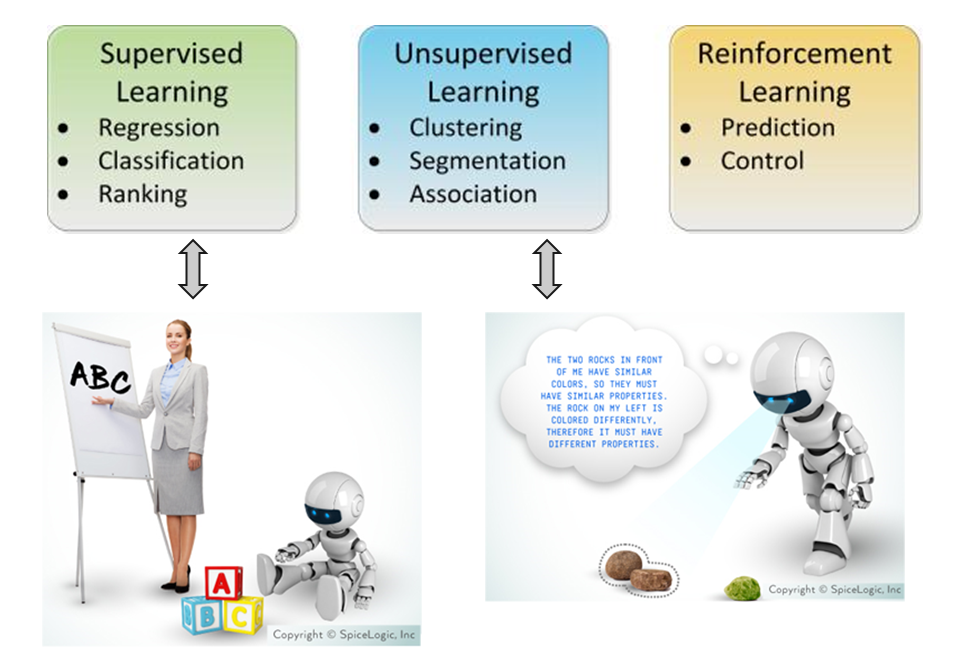

Statistics is about drawing valid conclusions about the underlying applied theory and about interpreting the parameters of its models. It insists on proper and rigorous methodology, and is comfortable with making and noting assumptions. It cares about how the data was collected and about the resulting properties of the estimator or experiment (e.g. p-value). The focus is on hypothesis testing.

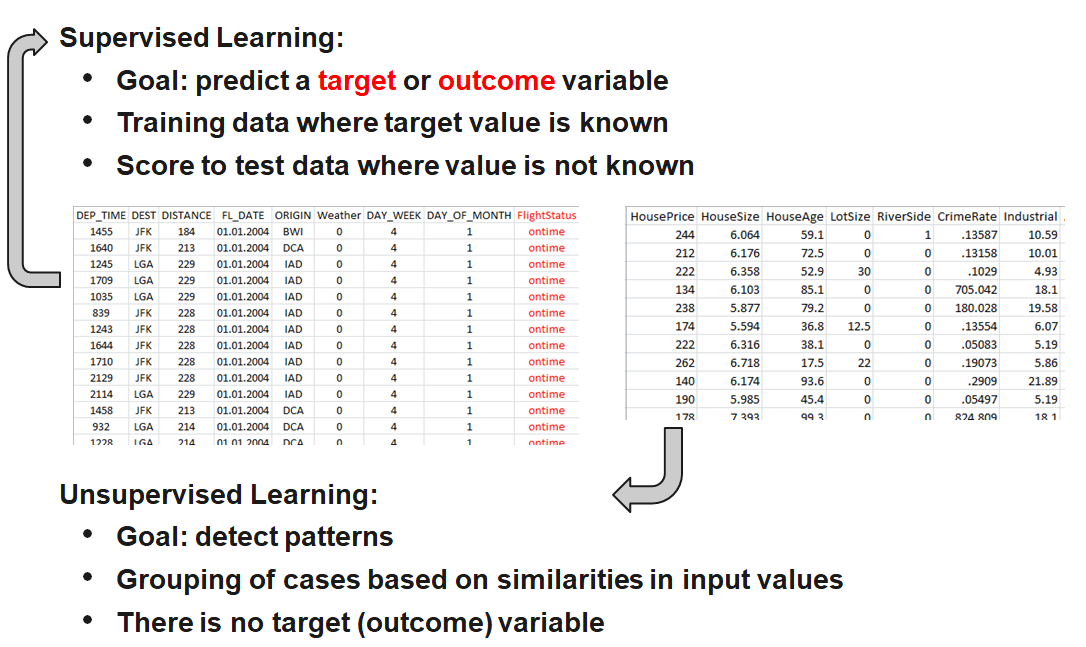

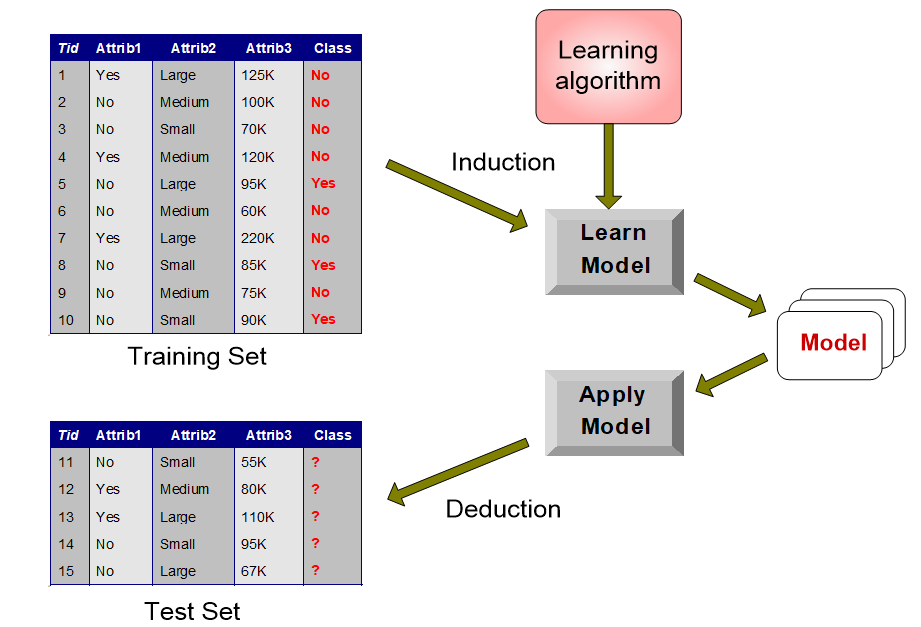

Machine Learning (ML) aims to derive practice-relevant findings from existing data and to apply the trained models to data not previously seen (prediction). It tries to predict or classify as accurately as possible. It cares deeply about scalability and uses the predictions to make decisions. Much of ML is motivated by problems that need answers. ML is happy to treat the algorithm as a black box as long as it works.

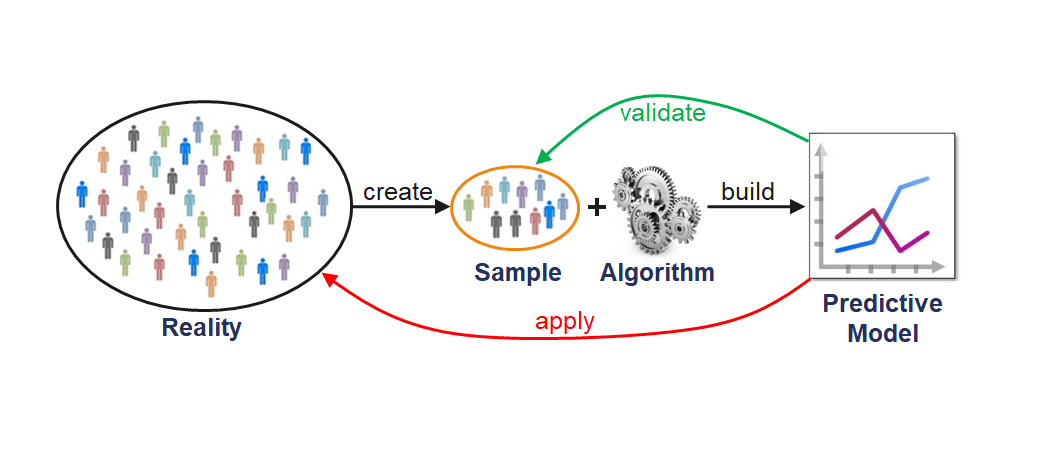

Traditional Analytics Process

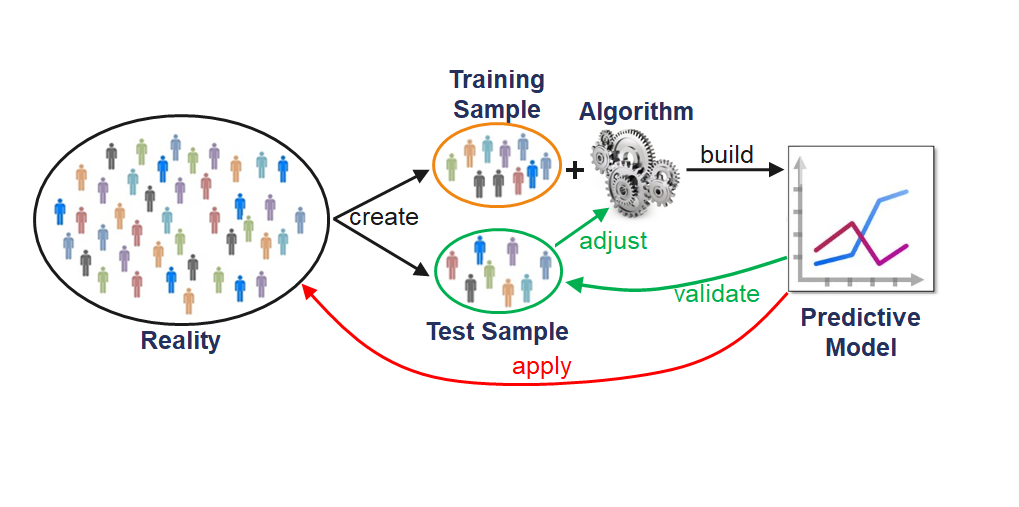

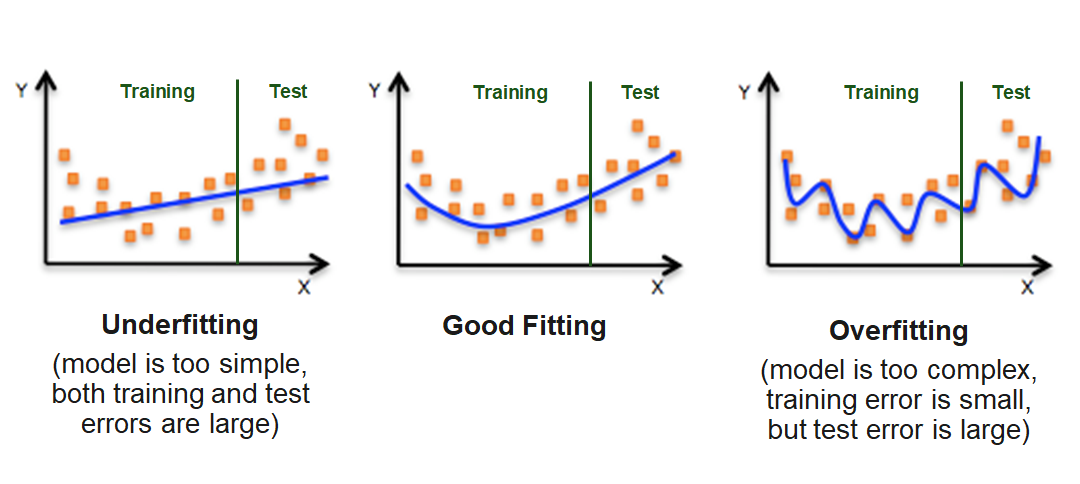

Note: large ML models may create overfitting issues… -> need for separate test set

State the generalization problem very clearly.

Ask students for ideas

Introduce the high-level pipeline (Modern Analytics Process)

2.1 Labeled Data

- Input features (X) and target labels (y)
- Importance of data quality and representativeness

2.2 Feature Engineering

- Transformations, encoding, normalization
- Why features matter more than the model itself

2.3 Train–Test Split

- Why we split data into training and testing sets
- Preventing information leakage
- Hold-out, cross-validation (brief mention)

2.4 Model Training

- Fit model on training data
- Conceptual: “learning patterns/parameters”

2.5 Evaluation on Test Data

- Predict on unseen data
- Establish generalization performance

2.6 Applying the Model Beyond the Known Sample

- Using the model in decision-making or prediction for future cases

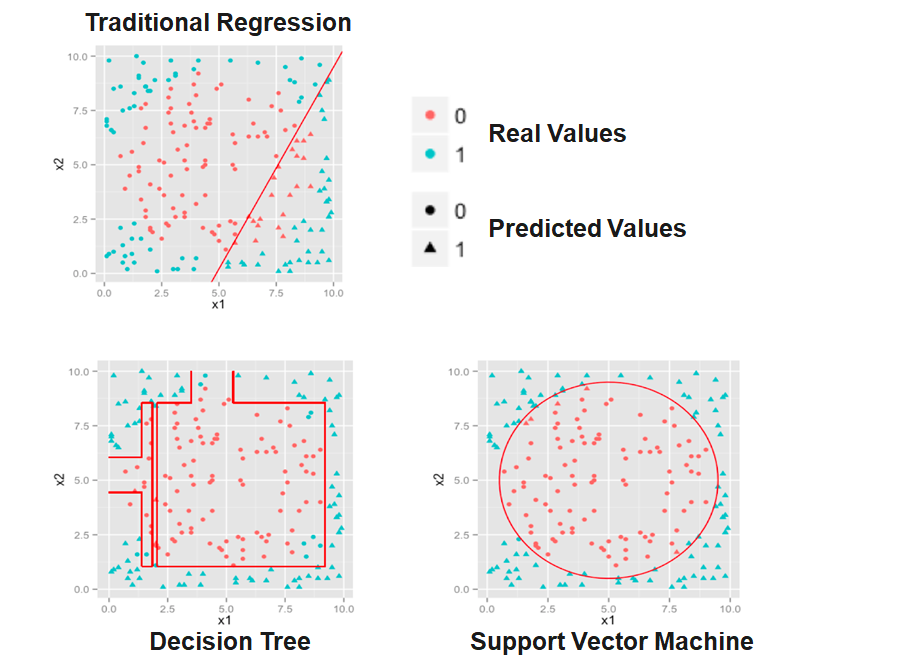

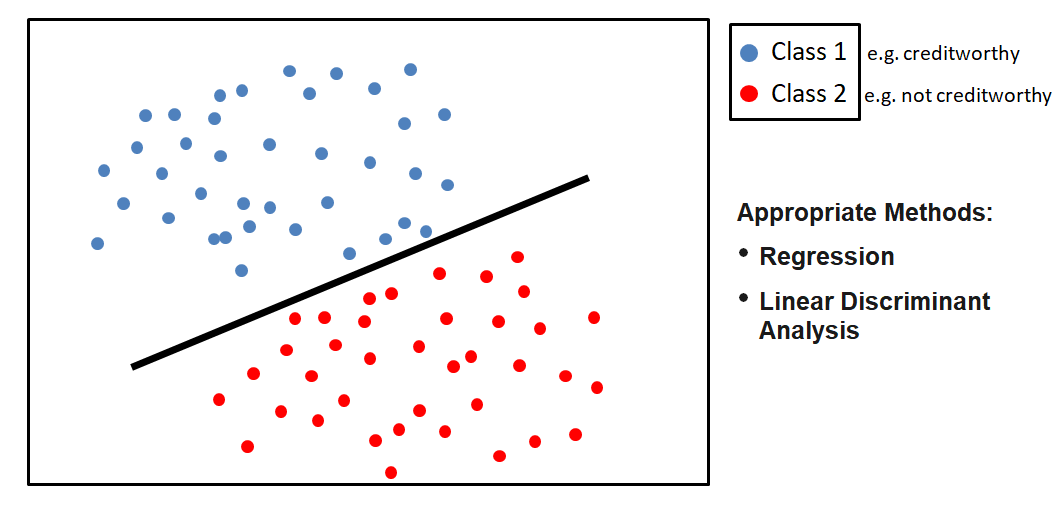

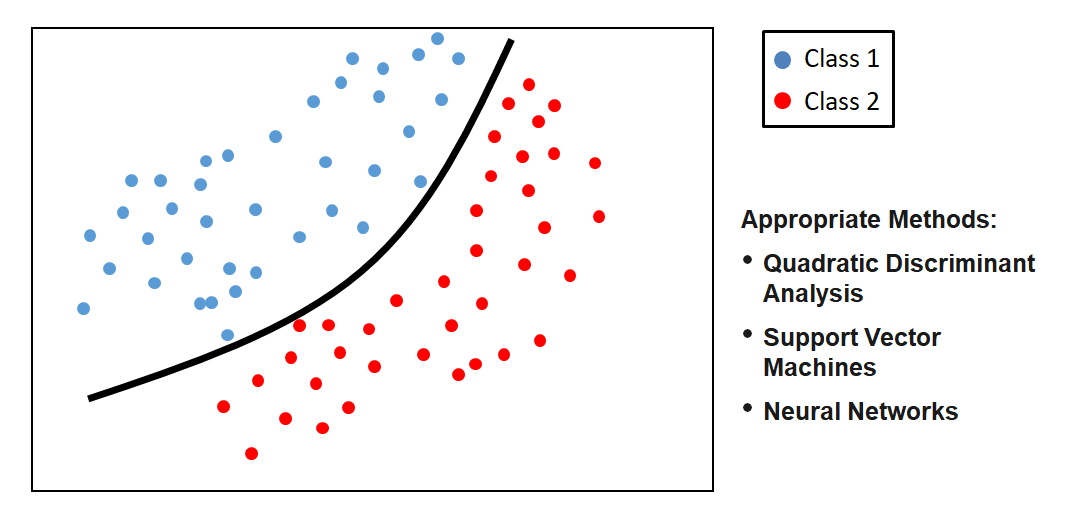

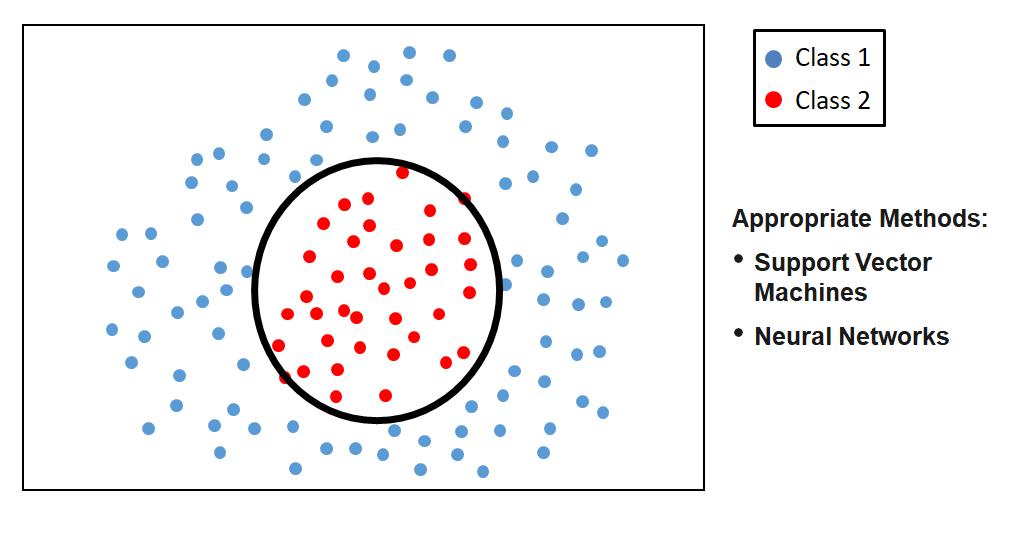

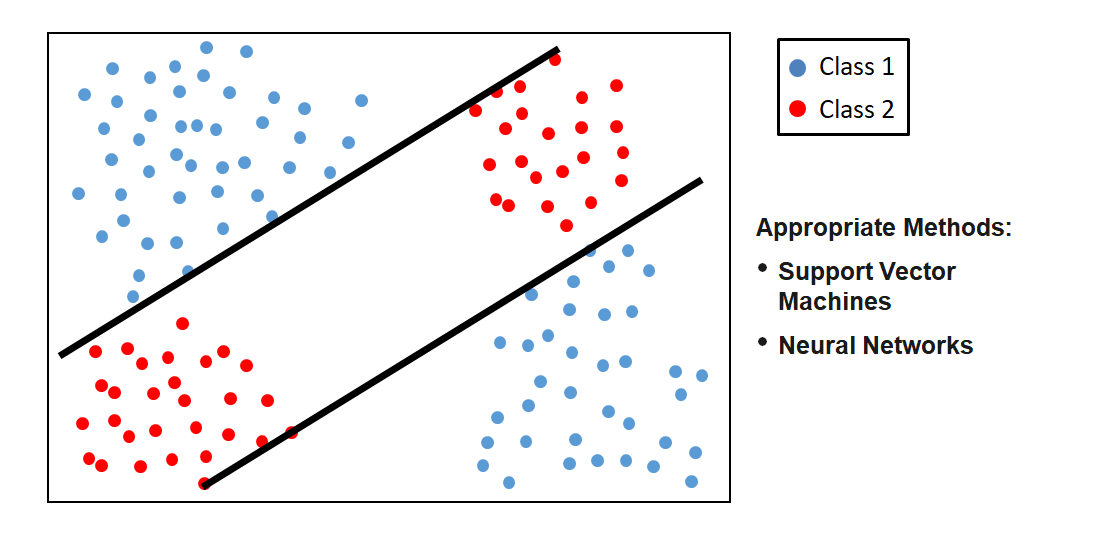

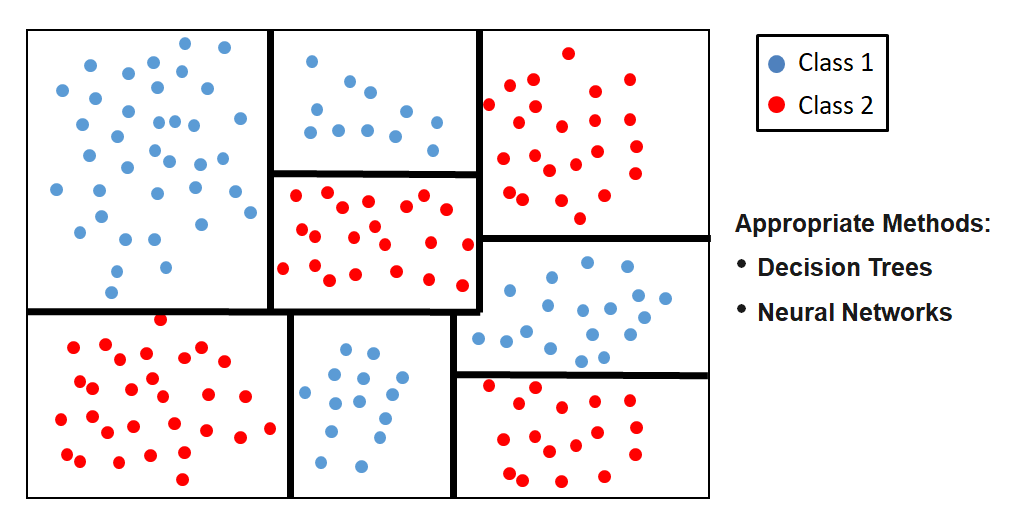

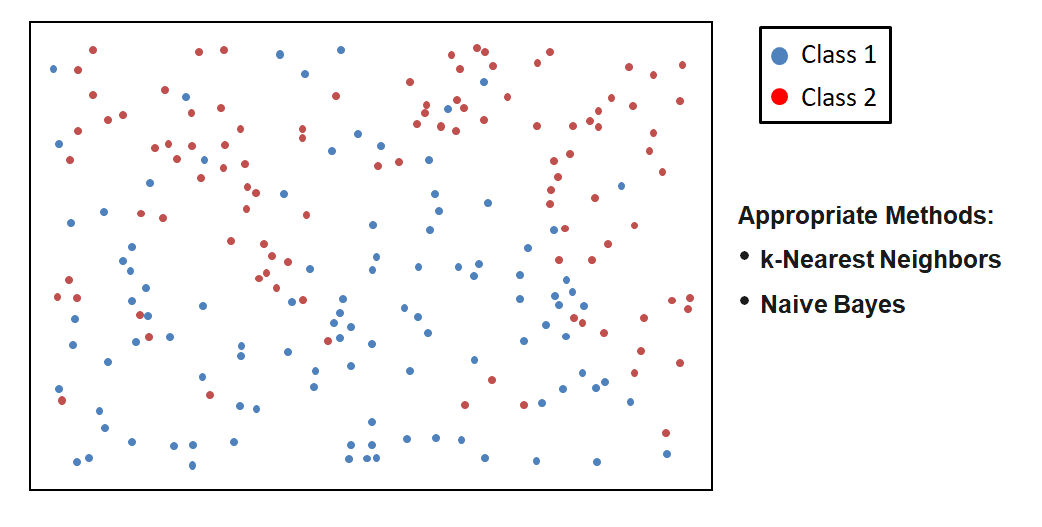

Which Method should I choose?

The choice of a data analysis method depends both on the scope of application and on the interrelationships in the data to be analyzed.

In the Big Data area, data spaces are often high-dimensional, which makes it difficult to visualize these interrelationships.

For this reason, the method often cannot be chosen ex ante. In these cases, several methods are tried in competition and the most suitable one is selected.

Note: Emphasize the why behind different models: Different inductive biases, different levels of flexibility, different data needs.

Feature engineering is the process of using domain knowledge of the data to create features that make machine learning algorithms work. If feature engineering is done correctly, it increases the predictive power of machine learning algorithms by creating features from raw data that help facilitate the machine learning process.

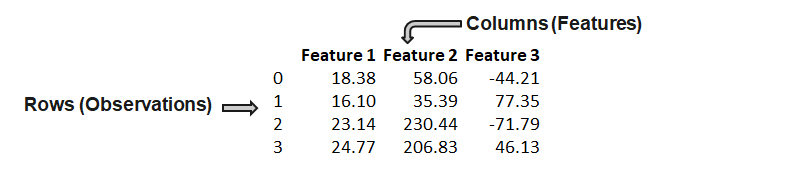

A feature (variable, attribute) is depicted by a column in a dataset. Considering a generic two-dimensional dataset, each observation is depicted by a row and each feature by a column, which will have a specific value for an observation:

Features can be of two major types. Raw features are obtained directly from the dataset with no extra data manipulation or engineering. Derived features are usually obtained from feature engineering, where we extract features from existing data attributes. A simple example would be creating a new feature “Age” from an employee dataset containing “Birthdate”.
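The “Age” example can be sketched in a few lines. This is a minimal illustration, not a prescribed implementation: the function name `age_in_years`, the sample records, and the fixed reference day are all assumptions made for the example.

```python
from datetime import date

def age_in_years(birthdate: date, today: date) -> int:
    """Derive the feature "Age" (completed years) from the raw feature "Birthdate"."""
    # Subtract one year if this year's birthday has not happened yet.
    had_birthday = (today.month, today.day) >= (birthdate.month, birthdate.day)
    return today.year - birthdate.year - (0 if had_birthday else 1)

# Hypothetical employee records; "Birthdate" is the raw feature.
employees = [
    {"Name": "A", "Birthdate": date(1990, 6, 15)},
    {"Name": "B", "Birthdate": date(2000, 12, 1)},
]

reference_day = date(2026, 4, 20)  # arbitrary fixed day so the result is reproducible
for row in employees:
    row["Age"] = age_in_years(row["Birthdate"], reference_day)  # derived feature
```

Fixing the reference day, rather than calling `date.today()`, keeps the derived feature identical across runs.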

1. Transformation

2. Type Conversion

3. Feature Combination

4. Feature Composition

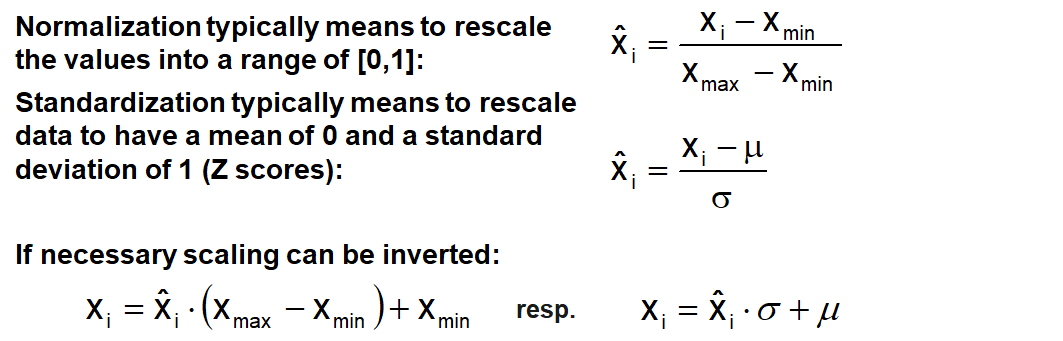

Most datasets contain features highly varying in magnitudes, units and range.

Many machine learning algorithms are sensitive to this because they rely on distance measures or gradient-based optimization. Features with large magnitudes dominate the distance calculation, and gradient-based training can converge slowly or become numerically unstable.

To overcome this effect, we scale the features to bring them to the same level of magnitudes. The two most discussed scaling methods are Normalization and Standardization.
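As a rough sketch of the two methods, assuming the usual definitions (min-max rescaling to [0, 1] for normalization, z-scores for standardization); the function names and the sample values are illustrative only:

```python
from statistics import mean, pstdev

def normalize(values):
    """Min-max normalization: rescale values to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:  # constant feature: nothing to rescale
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

def standardize(values):
    """Standardization (z-score): shift to mean 0, scale to standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:  # constant feature
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]

income = [20000, 40000, 60000, 100000]  # hypothetical feature with large magnitudes
age = [20, 30, 40, 60]                  # hypothetical feature with small magnitudes

income_scaled = normalize(income)
age_scaled = normalize(age)
age_z = standardize(age)
```

After scaling, both features live on the same scale, so neither dominates a distance calculation. In a real pipeline the scaling parameters (minimum, maximum, mean, standard deviation) would be computed on the training data only and then reused for the test data, to avoid information leakage.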

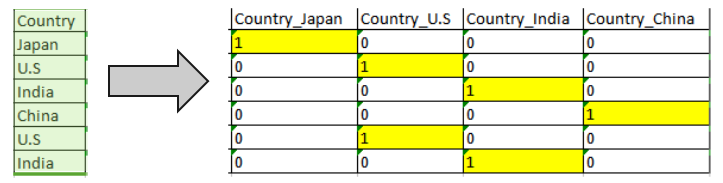

Many machine learning algorithms cannot work with categorical data directly. To convert categorical data to numbers, there exist two variants:

Label encoding refers to transforming the word labels into numerical form so that the algorithms can operate on them. Every categorical value is assigned one numerical value, e.g. young → 1, middle_age → 2, old → 3. This is appropriate only when the categories have a natural order (ordinal features), because the numeric codes imply a ranking and a distance between the categories.

One hot encoding is a representation of a categorical variable as binary vectors. Every categorical value is assigned to an artificial binary variable. If the corresponding categorical value occurs in a data row the value of its binary replacement is equal to 1 else 0, e.g.

It is usual when creating dummy variables to have one less variable than the number of categories present to avoid perfect collinearity (dummy variable trap).
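Both encodings can be sketched without any library. The helper names `label_encode` and `one_hot_encode`, the `drop_first` flag, and the `color` feature are assumptions made for this illustration; only the age-group example comes from the text above.

```python
def label_encode(values, order):
    """Map each category to its 1-based position in an explicit, ordered list."""
    codes = {category: i for i, category in enumerate(order, start=1)}
    return [codes[v] for v in values]

def one_hot_encode(values, drop_first=False):
    """Return (column_names, rows) with one binary column per category."""
    categories = sorted(set(values))
    if drop_first:
        # Keep k-1 dummy columns to avoid the dummy variable trap.
        categories = categories[1:]
    rows = [[1 if v == c else 0 for c in categories] for v in values]
    return categories, rows

# Ordinal feature: the order is meaningful, so label encoding is reasonable.
age_group = ["young", "old", "middle_age", "young"]
labels = label_encode(age_group, order=["young", "middle_age", "old"])

# Hypothetical nominal feature: no order, so one-hot encoding is used.
color = ["red", "green", "blue", "green"]
columns, dummies = one_hot_encode(color)
reduced_columns, reduced_dummies = one_hot_encode(color, drop_first=True)
```

With `drop_first=True` the dropped category ("blue" here) is represented by a row of zeros, so no information is lost.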

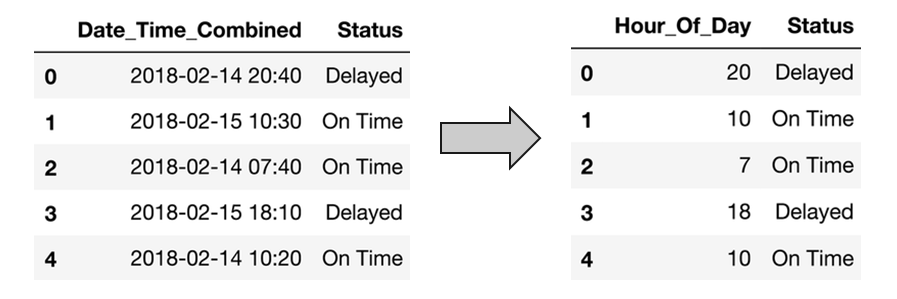

Data sets often contain date/time features. These are rarely useful in their original form because a raw timestamp is just a continuously increasing value. However, they can be useful for extracting cyclical factors, such as weekly or seasonal effects. Suppose we are given a data set of flight date-times and flight statuses, and we have to predict the status of a flight from its date-time.

But the status of the flight may depend on the hour of the day, not on the date-time. To analyze this, we will create the new feature “Hour_Of_Day”. Using the “Hour_Of_Day” feature, the machine will learn better as this feature is directly related to the status of the flight.
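A minimal sketch of this extraction, assuming the timestamp is stored as an ISO-formatted string. The field name `Flight_Date_Time`, the extra `Day_Of_Week` feature, and the two sample flights are hypothetical:

```python
from datetime import datetime

def add_time_features(record):
    """Return a copy of the record with features derived from its timestamp."""
    ts = datetime.fromisoformat(record["Flight_Date_Time"])
    enriched = dict(record)
    enriched["Hour_Of_Day"] = ts.hour       # 0..23
    enriched["Day_Of_Week"] = ts.weekday()  # 0 = Monday .. 6 = Sunday
    return enriched

# Hypothetical observations.
flights = [
    {"Flight_Date_Time": "2026-04-20 06:15:00", "Status": "on_time"},
    {"Flight_Date_Time": "2026-04-24 18:40:00", "Status": "delayed"},
]
flights_with_features = [add_time_features(f) for f in flights]
```

The original records are left unchanged, which makes it easy to compare models trained with and without the derived features.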

Suppose we are given latitude, longitude, and other data, with the objective of predicting the target feature “Price_Of_House”. Taken individually, latitude and longitude carry little information in this context, so we combine them into a single feature.

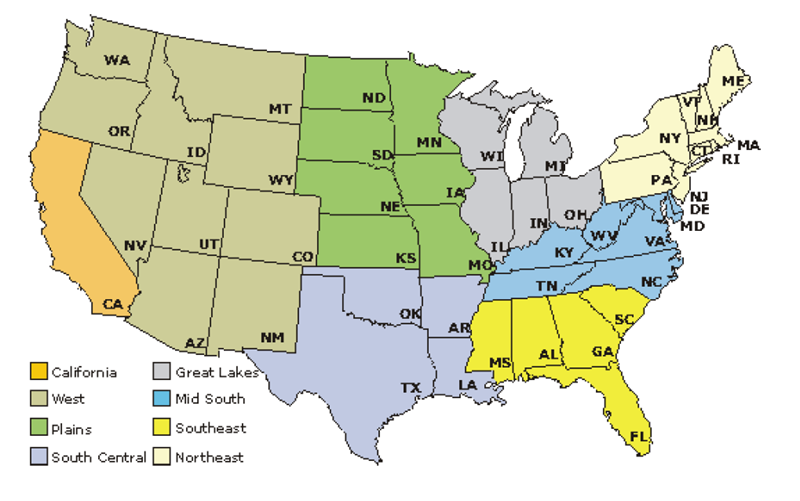

In other cases, it might be appropriate to transform latitude and longitude into categories which reflect regions, for example.
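One simple way to turn coordinates into a region category is to bin them into grid cells. This is only a sketch under that assumption: the function `grid_region`, the cell size, and the coordinates are illustrative, and real projects might use postal codes or administrative districts instead.

```python
import math

def grid_region(latitude, longitude, cell_size_deg=0.5):
    """Combine latitude and longitude into a single categorical region label."""
    row = math.floor(latitude / cell_size_deg)
    col = math.floor(longitude / cell_size_deg)
    return f"cell_{row}_{col}"

# Hypothetical house locations: the first two are close together, the third is not.
houses = [(48.14, 11.58), (48.40, 11.70), (52.52, 13.40)]
regions = [grid_region(lat, lon) for lat, lon in houses]
```

Nearby houses fall into the same cell and therefore share one category, which can then be encoded like any other categorical feature.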

Suppose we are given a feature “Marital_Status” and other data with the objective to classify customers into “Creditworthy” and “Not_Creditworthy”. In the data set, the marital status has many different values, for example:

To avoid a transformation into too many, possibly dominating, dummy features, we can group similar classes, e.g. into single, married, and widowed.

If some sparse classes remain that cannot be assigned in a meaningful way, they can be merged into a single “other” class.
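The grouping step might look as follows. The raw values, the mapping, and the `min_count` threshold are hypothetical; which classes count as "similar" is a domain decision.

```python
from collections import Counter

def group_categories(values, mapping, min_count=2, other="other"):
    """Map raw categories to broader groups, then merge sparse groups into one class."""
    grouped = [mapping.get(v, v) for v in values]
    counts = Counter(grouped)
    return [g if counts[g] >= min_count else other for g in grouped]

# Hypothetical raw values of "Marital_Status".
marital_status = ["single", "never married", "married", "remarried",
                  "widowed", "widow", "separated"]

# Hypothetical grouping of similar classes.
mapping = {"never married": "single", "remarried": "married", "widow": "widowed"}

grouped_status = group_categories(marital_status, mapping)
```

Here "separated" occurs only once after grouping and is therefore assigned to "other".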

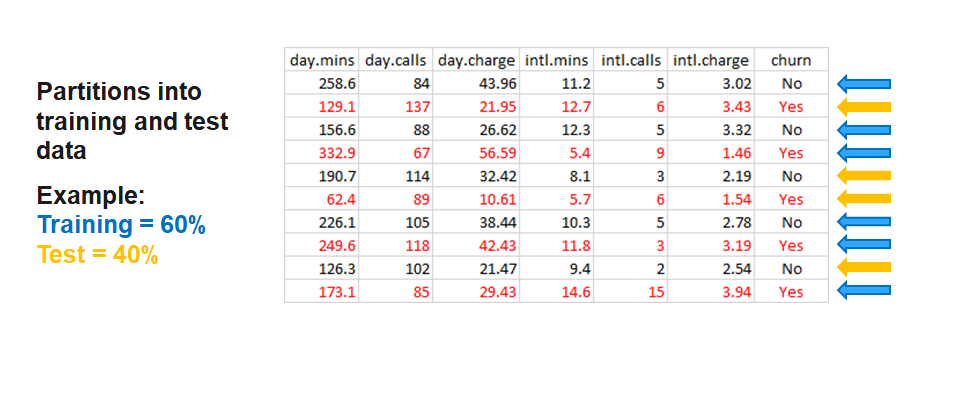

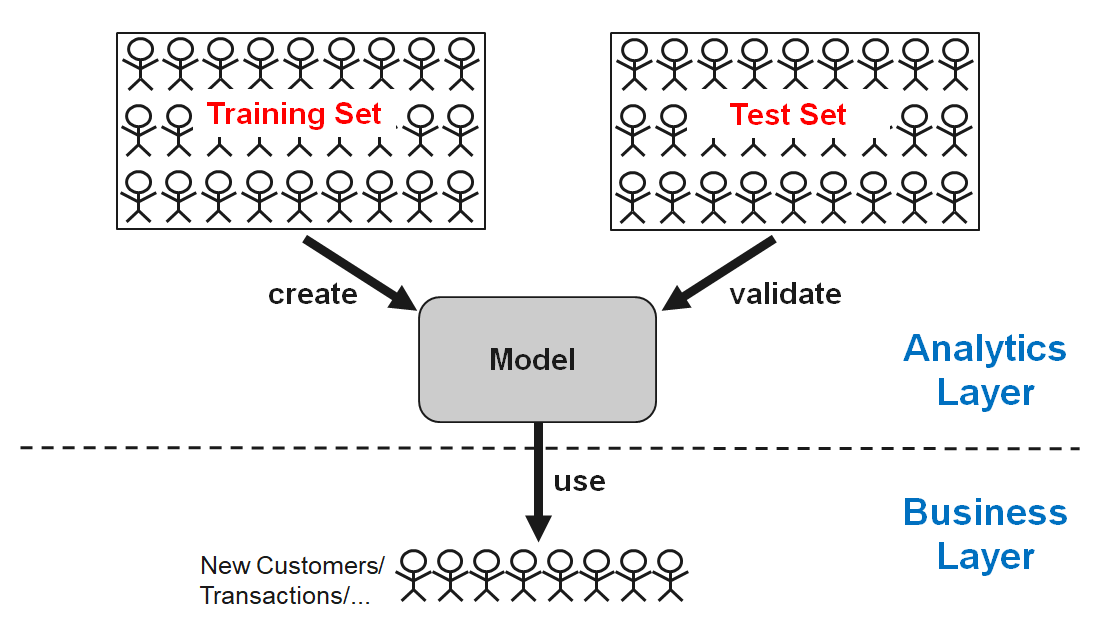

The data are partitioned into training and test data to check whether the analytical results generalize. The analysis (e.g. the development of a classifier) is carried out on the training data. Subsequently, the resulting model is applied to the test data. If the results on the test data are significantly worse than on the training data, the model does not generalize; this is called overfitting.
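A simple hold-out split can be sketched as follows. The helper names, the 80/20 ratio, and the seed are assumptions for illustration; libraries such as scikit-learn offer ready-made equivalents.

```python
import random

def train_test_split(rows, test_fraction=0.2, seed=42):
    """Shuffle a copy of the rows and split it into disjoint training and test sets."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)  # seeded for reproducibility
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]

def generalization_gap(train_score, test_score):
    """Positive values mean the model does worse on unseen data than on training data."""
    return train_score - test_score

rows = list(range(100))  # placeholder observations
train, test = train_test_split(rows)
```

A large positive `generalization_gap` between training and test performance is the symptom of overfitting described above. The test rows must not be used in any fitting step, including feature scaling.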

The partitioning of the data in training and test data can be carried out in the following ways:

Introduce classification performance evaluation:

TODO: cover cases where some error types are more costly than others

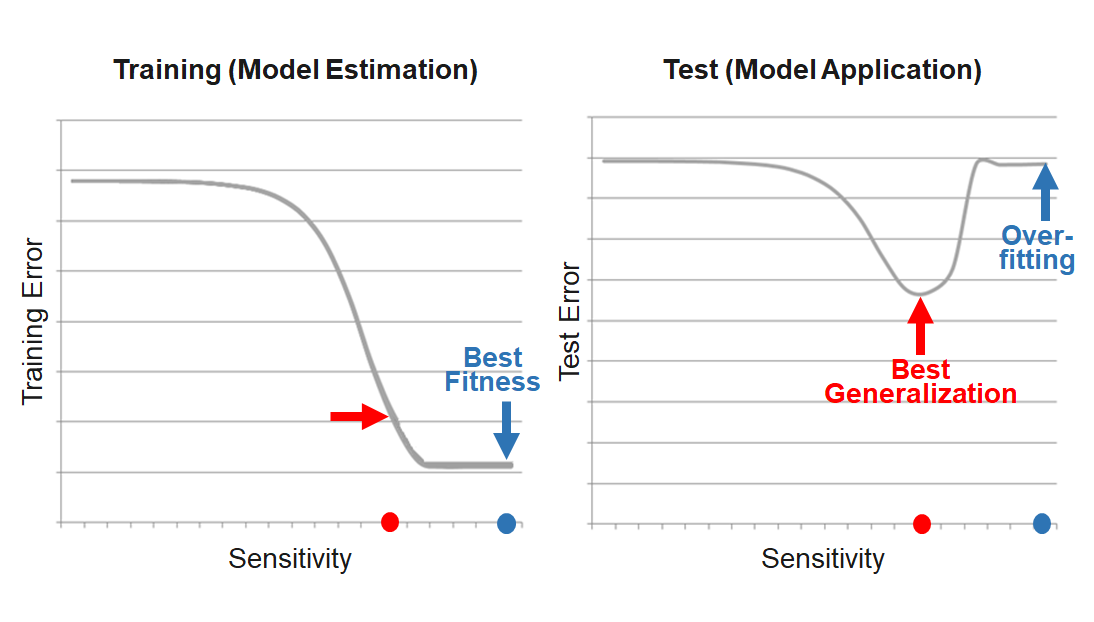

Due to the problem of overfitting, the main goal is to maximize the prediction quality and not to fit the data that is used for the model estimation as well as possible. This is equivalent to minimizing the risk that the model will have weak predictive ability.

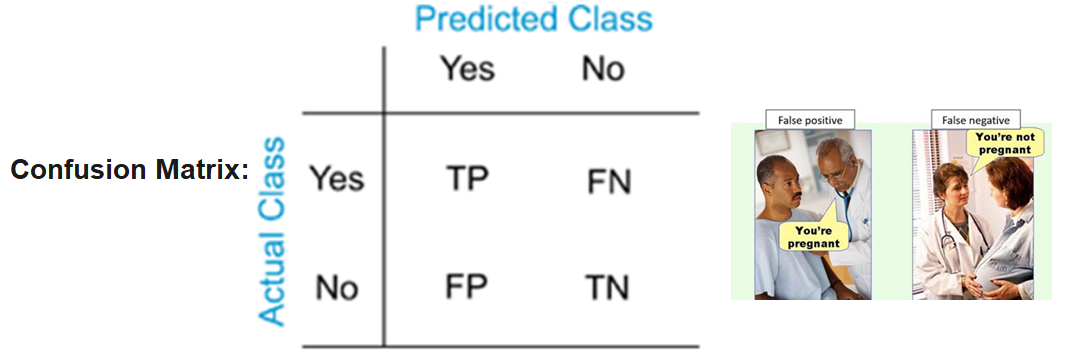

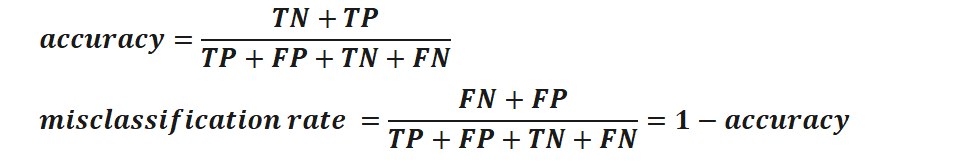

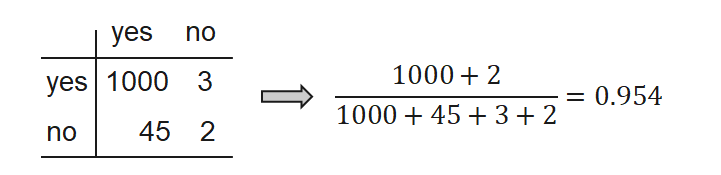

True positives (TP), true negatives (TN), false positives (FP), and false negatives (FN) are the four possible outcomes of a single prediction in the two-class case. A false positive is a case that is classified as positive (“yes”) when it is in fact negative (“no”). A false negative is a case that is classified as negative when it is in fact positive. True positives and true negatives are correct classifications.
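Counting the four outcomes is straightforward. In this sketch the function name, the label values, and the sample predictions are made up for illustration:

```python
def confusion_counts(y_true, y_pred, positive="yes"):
    """Count TP, TN, FP and FN for a two-class problem."""
    tp = tn = fp = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # predicted positive, actually positive
            else:
                fp += 1  # predicted positive, actually negative
        else:
            if actual == positive:
                fn += 1  # predicted negative, actually positive
            else:
                tn += 1  # predicted negative, actually negative
    return {"TP": tp, "TN": tn, "FP": fp, "FN": fn}

# Hypothetical true labels and model predictions.
y_true = ["yes", "yes", "no", "no", "yes", "no"]
y_pred = ["yes", "no", "no", "yes", "yes", "no"]
counts = confusion_counts(y_true, y_pred)
```

The four counts always sum to the number of predictions, and all metrics discussed in this lecture can be derived from them.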

Test metrics are used to assess how accurately the model predicts the known values:

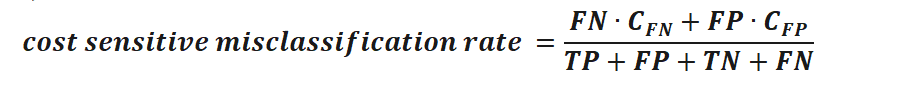

Most classification algorithms aim to minimize the misclassification rate. They implicitly assume that all misclassification errors are equally costly. In many real-world applications, this assumption does not hold. Cost-sensitive learning takes costs, such as the misclassification cost, into consideration. Using costs, the error rate can be calculated via:
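One common way to express a cost-weighted error is to weight each error type by its cost and average over all observations. Treat the following as a sketch under that assumption; the exact formula used in the lecture may differ, and the cost values and counts are invented.

```python
def misclassification_rate(tp, tn, fp, fn):
    """Share of wrong predictions; every error counts the same."""
    return (fp + fn) / (tp + tn + fp + fn)

def average_misclassification_cost(tp, tn, fp, fn, cost_fp=1.0, cost_fn=1.0):
    """Cost-weighted error: each error type is weighted by its (assumed) cost."""
    return (cost_fp * fp + cost_fn * fn) / (tp + tn + fp + fn)

# Hypothetical confusion-matrix counts.
tp, tn, fp, fn = 40, 50, 5, 5

plain_rate = misclassification_rate(tp, tn, fp, fn)
# Assumption: a false negative is ten times as costly as a false positive.
weighted_cost = average_misclassification_cost(tp, tn, fp, fn, cost_fp=1.0, cost_fn=10.0)
```

With unit costs the weighted version reduces to the plain misclassification rate; with unequal costs, two models with the same error rate can differ considerably in cost.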

Misclassification rate and accuracy can be misleading, for example in the case of imbalanced samples. Extreme case:

For problems like this, additional measures are required to evaluate a classifier.

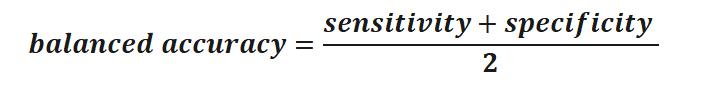

Sensitivity (true positive rate, recall) measures the proportion of positives that are correctly identified as such. Specificity (true negative rate) measures the proportion of negatives that are correctly identified as such.

Using both measures, we can compute the Balanced Accuracy.
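These measures follow directly from the confusion-matrix counts. A minimal sketch, assuming both classes occur in the data (otherwise a denominator would be zero); the counts are hypothetical:

```python
def sensitivity(tp, fn):
    """True positive rate (recall): share of actual positives that are found."""
    return tp / (tp + fn)

def specificity(tn, fp):
    """True negative rate: share of actual negatives that are found."""
    return tn / (tn + fp)

def balanced_accuracy(tp, tn, fp, fn):
    """Arithmetic mean of sensitivity and specificity."""
    return (sensitivity(tp, fn) + specificity(tn, fp)) / 2

# Hypothetical counts: 10 actual positives, 90 actual negatives.
tp, fn, tn, fp = 8, 2, 45, 45

sens = sensitivity(tp, fn)
spec = specificity(tn, fp)
bal_acc = balanced_accuracy(tp, tn, fp, fn)
```

Because both classes contribute equally, balanced accuracy is not inflated by good performance on the majority class alone.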

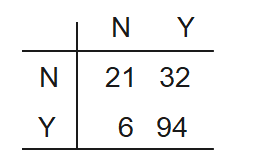

Consider the following case: a credit card company wants to build a fraud detection system and integrate it into its transaction systems. The possible outcomes are “Accept” (Y) and “Reject” (N). Because fraud is rare, the data set is imbalanced: it contains 320 observations for Y and 139 for N. The data are partitioned into a training set and a test set, and the model is trained and tested.

Because the Y class is in the majority, the training process concentrates on these cases: classifying them correctly promises the highest accuracy.

Consequently, the test results are:

Thus, the model is blind to the N cases, although these are the ones of primary interest to the company.
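To illustrate the effect numerically, assume a model that predicts “Y” for every case and apply it to the full class counts from the example. This is an assumption for illustration only and does not reproduce the actual test-set results; Y is treated as the positive class.

```python
n_accept, n_reject = 320, 139  # class counts from the example

# Assumption: the model predicts "Y" (Accept) for every observation.
tp, fn = n_accept, 0  # all Y cases are classified correctly
fp, tn = n_reject, 0  # all N cases are misclassified as Y

accuracy = (tp + tn) / (tp + tn + fp + fn)
sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
balanced_accuracy = (sensitivity + specificity) / 2

print(f"accuracy          = {accuracy:.3f}")
print(f"specificity       = {specificity:.3f}")
print(f"balanced accuracy = {balanced_accuracy:.3f}")
```

Accuracy comes out at roughly 0.70 even though not a single N case is detected; the specificity of 0 and the balanced accuracy of 0.5 expose the problem.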

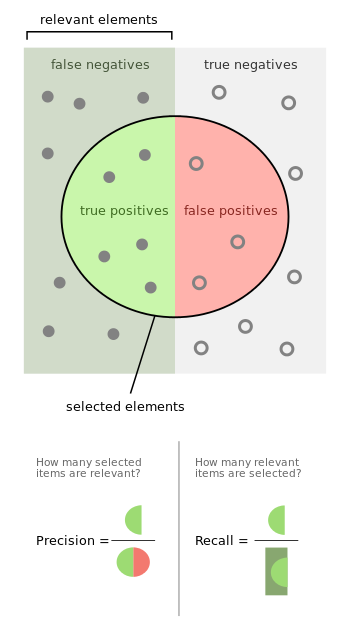

Precision measures the proportion of predicted positives that are true positives. A precision of 0.5 means that whenever the model predicts a positive, there is a 50% chance of it really being a positive. The higher the precision, the smaller the share of false positives among the predicted positives.

Recall measures the percentage of positives the model is able to catch. It is defined as the number of true positives divided by the total number of positives in the dataset. A recall of 50% would mean that 50% of the positives had been predicted as such by the model while the other 50% of positives have been missed by the model.

The F1 Score is the harmonic mean of precision and recall. The main idea is to strike a balance between the two and express it in a single metric.

An F1 score reaches its best value at 1 (perfect precision and recall) and its worst at 0.

It is commonly used in cases of high class imbalance.
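The three metrics can be computed from the confusion-matrix counts as sketched below. Returning 0.0 when a denominator is zero is a convention chosen for this example, not part of the definitions; the counts are hypothetical.

```python
def precision(tp, fp):
    """Share of predicted positives that are actually positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0

def recall(tp, fn):
    """Share of actual positives that the model catches."""
    return tp / (tp + fn) if (tp + fn) else 0.0

def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall."""
    p, r = precision(tp, fp), recall(tp, fn)
    return 2 * p * r / (p + r) if (p + r) else 0.0

# Hypothetical model A: balanced precision and recall.
f1_a = f1_score(tp=5, fp=5, fn=5)

# Hypothetical model B: perfect precision but very low recall.
f1_b = f1_score(tp=1, fp=0, fn=9)
```

For model B the arithmetic mean of precision (1.0) and recall (0.1) would be 0.55, whereas the F1 score is about 0.18: the harmonic mean is pulled towards the weaker of the two values.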

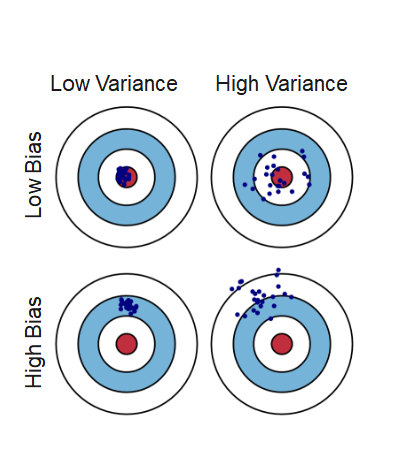

The expected prediction error (measured as squared error) can be decomposed into three components:

Expected squared error = Bias² + Variance + Noise

Bias is the inability of the used method to learn the relevant relations between the inputs and the outputs. It reflects the method quality, e.g. if a method only produces linear models.

Variance represents the deviation resulting from the sensitivity of the created model to small fluctuations in the data.

Typically, there is a tradeoff between bias and variance.

Noise is everything that arises from random variations in the data. It cannot be controlled.
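For squared-error loss, this decomposition is usually written with a squared bias term. The notation below is an assumption introduced for this sketch and does not come from the slides: the data follow $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\operatorname{Var}(\varepsilon) = \sigma^2$, and $\hat{f}$ is the model fitted on a randomly drawn training set.

```latex
\mathbb{E}\!\left[\big(y - \hat{f}(x)\big)^2\right]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{Bias}^2}
  + \underbrace{\mathbb{E}\!\left[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\right]}_{\text{Variance}}
  + \underbrace{\sigma^2}_{\text{Noise}}
```

The expectation is taken over training sets and noise. Only the first two terms depend on the chosen method, which is why more flexible models typically trade lower bias for higher variance.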